of Serious and Organised Crime

The increasing reliance on the internet to manage social, economic, and business operations is almost certainly expanding opportunities for the criminal exploitation and infiltration of online infrastructure. Increasing public and business resilience is crucial for mitigating SOC threats to the public.

The use of social engineering increases offenders’ capability to coerce, deceive, and extort individual victims and organisations online. User-to-user networking platforms facilitate mass discoverability, allow unmoderated and/or encrypted private messaging, and use algorithms to encourage connections and to generate engagement. This enables offenders to locate, identify, and connect with victims, and exploit their online data and personal information.

Online offenders are increasingly capable of identifying and targeting victims across a range of criminal threats, with individuals and networks identified operating across child sexual abuse, cybercrime, and fraud, targeting both individuals and organisations. It is almost certain that the motivations for offending are also increasingly diverse, with factors such as notoriety and status driving the participation of young offenders in online networks where offending and harm are perpetrated. It is highly likely that the gamification of offending within such networks enables offenders to distance themselves from the harms and consequences of their actions.

Interpersonal Harms

Women and children are disproportionately impacted by sexually motivated online offending. Online-facilitated child sexual abuse now accounts for at least 42% of recorded child sexual abuse offences in England and Wales. In 2025, the Child Sexual Abuse and Exploitation Referrals Bureau received over 92,000 referrals from online platforms, representing a 31% increase on the previous two years.

Offenders target both children and non-consenting adults, operating as individuals or in online networks to share and sell illegal sexual content online and, in some cases, to organise and coordinate sexual offending offline.

Offenders use the online environment to cause sexual, physical, and mental harm to victims remotely, which likely decreases offenders’ inhibitions and increases the severity of offending. Offenders exploit poverty abroad, paying for child sexual abuse to be produced and livestreamed. Victims worldwide are also groomed, coerced, and extorted to record and share self-generated indecent imagery of sexual acts, acts of self-harm, and/or harm to others. This can often involve escalatory demands to engage in increasingly harmful activity, and offenders may also make demands to meet victims offline, placing them at risk of contact offending.

It is likely that children are increasingly being financially incentivised to produce self-generated indecent imagery, mirroring online influencers on adult sites such as OnlyFans. Whilst almost certainly less common than grooming or coercion by offenders, this is almost certainly under-identified.

Male victims are almost certainly more susceptible to, and are more commonly victims of, financially motivated sexual extortion. Offenders engage both adult and child victims in the UK. Reports of financially motivated sexual extortion impacting children under 18 increased between 2024 and 2025; it is highly likely that media campaigns to raise awareness of the issue have contributed to an increase in reporting. Where financially motivated sexual extortion activities target children, it is highly unlikely that this is as a result of a sexual interest in children by these offenders, but rather children’s vulnerability to this model of offending.

Child sexual abuse offenders identify and target children on popular online networking and communication, livestreaming, and gaming platforms. Over the last three quarters of 2025, the Child Exploitation and Online Protection Centre received approximately 500 reports per month, a 50% increase driven primarily by increases in financially motivated sexual extortion and online grooming. Features of online platforms that increase the risk of children being targeted by offenders and exposed to harmful material, trends, and behaviours include:

- lacking effective moderation processes and/or requiring limited sign-up information;

- allowing discoverability of children via public profiles and friend suggestions;

- providing seamless movement between public and closed messaging spaces, particularly where those messaging services are end-to-end encrypted and allow private interactions between children and unknown adults;

- hosting unverified and/or unmoderated user-generated gaming and content; and

- featuring algorithms and/or chatbots that promote potentially harmful content, and reinforce potentially harmful user inputs and interests.

Implementation of the Online Safety Act 2023 requires providers to remove illegal content, provide highly effective age assurance, and consider how algorithms impact children’s exposure to harmful content. Online platforms’ adoption of the Act’s requirements is likely to embed and mature over the next 12 months, impacting offender capabilities to target children online and reducing children’s exposure to harmful content. However, this is dependent on platforms successfully implementing and demonstrating safety by design at scale.

Offenders use social media platforms and classified advertising websites to offer UK employment opportunities, recruiting potential victims of modern slavery and human trafficking in source countries into sexual and labour exploitation, domestic servitude, and exploitation in criminal activity. Victims are commonly deceived about the nature of the work they will be required to undertake, and the terms and conditions under which they will be forced to work.

Financial Harm to Individuals and Organisations

The online ecosystem provides tools, products, and services that allow offenders to target victims at scale for online and offline fraudulent activity. Fraud-enabling products include compromised datasets, phishing kits, malware, one-time password interception tools, scripts, and tutorials for cybercrime offences.

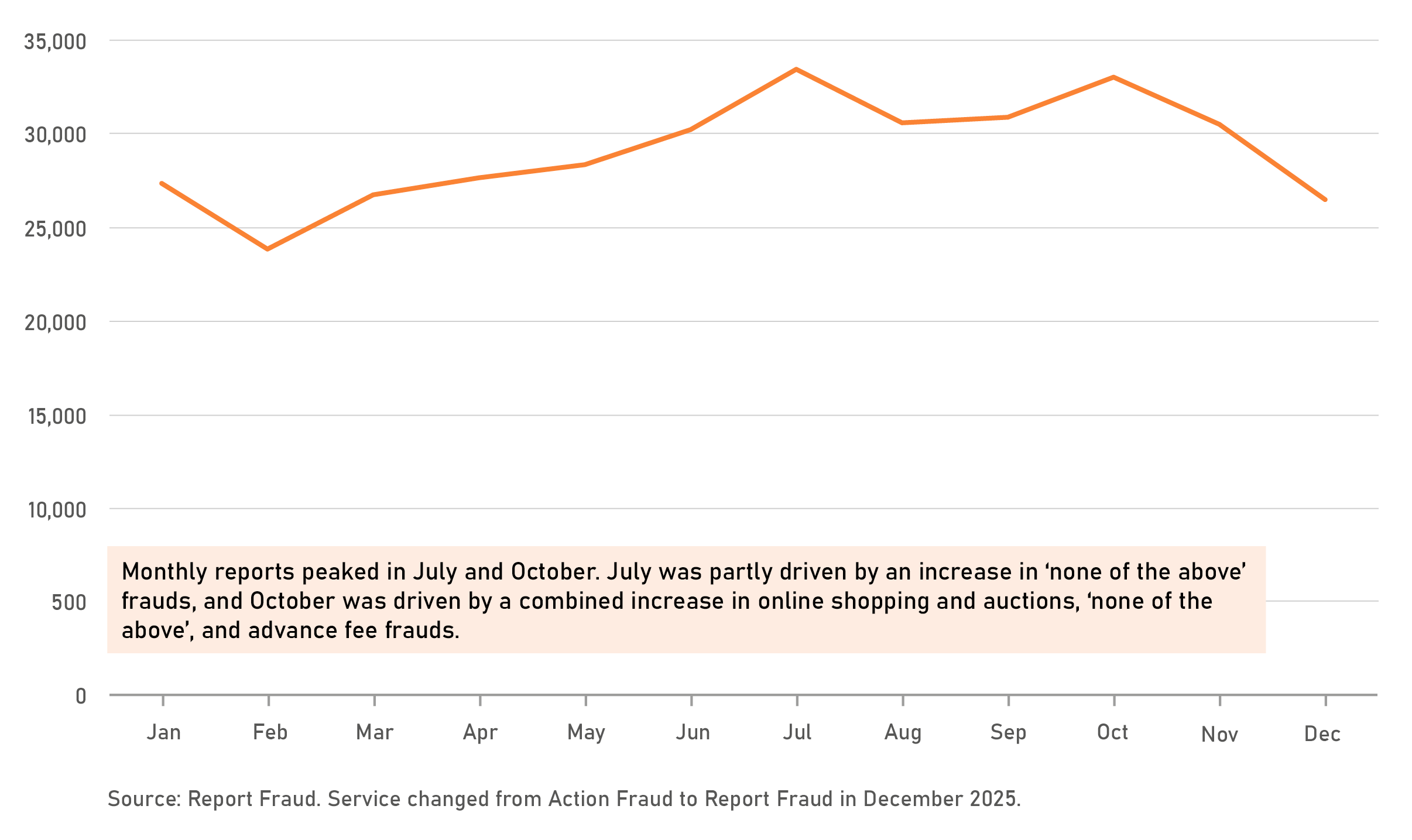

Although incidents submitted to Report Fraud in 2025 peaked in both July and October, there is no evidence of a substantial change in the overall threat. Monthly reports declined from October onwards and returned to the scale identified at the beginning of 2025. However, growing levels of card-not-present and investment fraud are being facilitated by online environments. Criminals exploit online advertisements to help them target large numbers of individuals, which is likely to be a factor behind rising investment fraud losses. Additionally, compromised data purchased from online marketplaces is increasingly being monetised via card-not-present fraud, with cases rising by 22%, from 1.36 million in the first half of 2024 to 1.66 million in the first half of 2025, according to UK Finance.

Ransomware remains the highest-harm serious and organised cybercrime threat impacting the UK, and the impact on organisations has been well documented in the UK in 2025. Successful ransomware attacks impacting Collins Aerospace, Co-op, Jaguar Land Rover, and Marks & Spencer demonstrate both the financial cost to businesses of ransomware deployments and the impact on the day-to-day lives of British citizens, including customers, workers in the supply chain, and the wider economy and tax base. Reporting estimates a cost to the UK economy of £1.9 billion, including a tangible impact on UK gross domestic product from the Jaguar Land Rover attack, which is estimated to be the costliest ransomware attack ever in the UK.

Cybercriminals from English-speaking nations, including the UK, have been involved in attacks that used social engineering techniques to gain access to victims’ credentials and systems, where their native use of the English language and understanding of their victims gives them an advantage. They have also made use of different forms of ransomware, demonstrating their use of tools developed by other cybercriminals.

Artificial Intelligence

The increased functionality, scale, and development of artificial intelligence tools and their adoption by offenders continue to enhance and enable SOC. Generative artificial intelligence imagery is increasingly realistic and indistinguishable from real imagery, and is being used to produce child sexual abuse material. Artificial intelligence-generated false documentation has been identified in use to circumvent identity checks, facilitating fraud, money laundering, and organised immigration crime offending.

In 2025, the Internet Watch Foundation identified 312,030 reports where analysts confirmed the presence of child sexual abuse material. This is a 7% increase on the 291,730 reports in 2024, with increasing levels of photo-realistic artificial intelligence-generated child abuse imagery contributing to this rise. Of 3,440 artificial intelligence videos of child sexual abuse discovered by the Internet Watch Foundation in 2025, 65% (2,230) were categorised as Category A, suggesting a trend towards artificial intelligence-generated child sexual abuse material being more extreme.

The use of artificial intelligence automation and translation tools is reducing language barriers, increasing opportunities for victim targeting, and making it easier for organised crime groups to collaborate internationally. The expansion of victim pools via the use of translation tools and better-quality scripts reduces victims’ ability to recognise and identify fraudulent activity, increasing the likelihood that offenders will be able to socially engineer and extract victim data and credentials. The use of artificial intelligence to automate fraudulent emails enables offenders to tailor content at scale, helping to bypass filters designed to detect malicious communications. There continue to be incremental improvements in the abilities displayed by artificial intelligence-generated malware.

Case Study | The Sanctuary | Disrupting Online Child Sexual Exploitation

Following the launch of an investigation by the United States of America's Federal Bureau of Investigation into a private online messaging forum dedicated to the sexual exploitation of children named ‘The Sanctuary’, a UK-based IT worker, Robert Chown (49), was identified as a key contributor. From his home in South London, Chown masqueraded as a teenage boy online to target thousands of children worldwide, coercing them into being filmed or photographed committing non-consensual sexual acts.

Girls and boys as young as six years old were groomed by Chown to livestream sexual acts at his instruction, which he would capture and share with other paedophiles on ‘The Sanctuary’ and the dark web. He went on to share hundreds of abuse images in the forum that he had captured over years of sexually exploiting children online. Chown also posted an indecent photo of a 12-year-old girl that he had taken in person, who was subsequently identified and safeguarded by the NCA and child protection services. Investigators seized two mobile devices and found over 2,000 indecent images and videos of children in categories A to C. They also discovered 204 entries into Google Translate of sexual instructions translated from English into Russian and Polish.

Following an NCA investigation, Chown was sentenced to 25 years, with seven to be served on licence, having pleaded guilty to 41 charges at a previous court hearing. Chown was also handed a lifetime Sexual Harm Prevention Order and will be on the sex offenders register for the rest of his life.

Fraud Incidents Submitted to Report Fraud in 2025